If your voice agent ends calls too early, skips confirmations, or sounds like it is reading a script instead of asking a real question, the problem may be tag questions. It may also be a model-fit issue.

What is a tag question?

A tag question is a short phrase added to the end of a statement, such as:

“right?”

“correct?”

“okay?”

“all good?”

For example:

“Your number is 5-5-5, 1-2-3-4 — right?”

This looks like a question, but in speech, it often sounds like the speaker is wrapping up. Many callers hear it as a closing signal rather than a genuine request to verify the information.

Voice agents and language models can treat it the same way.

Tag questions often break voice agents.

When a voice agent says:

“Your callback time is tomorrow at 2 — all good?”

The sentence often flows straight into the tag question. The voice may trail off at the end, making it sound like the agent is done speaking.

Two things can happen:

- The caller may not realise they are meant to check the information. They may just say “yep, thanks, bye.”

- The model may treat the confirmation as complete and move to the next step too soon. In some flows, that next step is end_call.


This is a common reason users complain that the agent ended the call before they finished speaking.

Model-specific behaviour matters more than expected.

What I observed during testing is that the failure isn't uniform across models, and neither is the fix.

Some models are better at understanding the wider conversation. Others follow the prompt more literally.

Anthropic models (such as Sonnet 4.5 and Sonnet 4.6) often understood that the agent should wait for the caller, even when the prompt used a weak confirmation like: “5-5-5, 1-2-3-4 — right?”

These models can often determine the correct pause from the conversational context.

OpenAI models (GPT-4.1, GPT-5.1) behaved more literally.

When a prompt contains a step like:

Say the confirmation, then move to the close, then end_call

GPT-5.1 may treat that as one combined instruction. The tag question can make the problem worse because it does not clearly interrupt the flow.

So the model may say the confirmation and continue straight to the closing line.
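To make this failure concrete, here's a minimal Python sketch that scans a logged call for it. The `Event` shape and the role labels (`agent`, `caller`, `tool`) are assumptions for illustration, not any platform's real log format, so you'd need to map your own transcript export onto them.

```python
from typing import NamedTuple


class Event(NamedTuple):
    role: str  # "agent", "caller", or "tool" (hypothetical labels)
    text: str  # spoken text, or the tool name for "tool" events


def ended_before_caller_replied(events: list[Event], confirmation_text: str) -> bool:
    """Return True if end_call fired after the confirmation with no caller turn in between."""
    awaiting_reply = False
    for event in events:
        if event.role == "agent" and confirmation_text in event.text:
            # The agent asked for confirmation; a caller turn should come next.
            awaiting_reply = True
        elif event.role == "caller":
            awaiting_reply = False
        elif event.role == "tool" and event.text == "end_call" and awaiting_reply:
            return True
    return False


# Illustrative call: confirmation and closing line land in the same agent turn.
bad_call = [
    Event("agent", "5-5-5, 1-2-3-4, right? Thanks. Have a great day."),
    Event("tool", "end_call"),
]
print(ended_before_caller_replied(bad_call, "right?"))
```

Running a check like this over a batch of test calls gives you a count of premature endings instead of relying on caller complaints.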

What does this mean for you?

The same prompt may work well on Sonnet 4.6 but fail sometimes on GPT-5.1.

That does not always mean the prompt is “bad.” It may mean the prompt is not a good fit for the model you are using. So the fix should depend on the model.

A tag question can reduce reliability, especially near the end of a call.

Using a full question in a separate sentence improves how the confirmation sounds, and it helps models wait for the caller more reliably.

For example:

Instead of this:

“5-5-5, 1-2-3-4 — right?”

Use this:

“5-5-5, 1-2-3-4. Did I get that right?”

This works better because the final sentence sounds like a real question both to your caller and to the LLM.
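If you maintain many prompts, a quick scan can flag tag questions before they reach production. The sketch below is one possible approach in Python. The tag list comes from the examples in this article, and the pattern (a comma or dash followed by the tag) is my assumption about how these usually appear in prompts, so expect to tune it.

```python
import re

# Tags taken from the examples in this article; extend the list for your own prompts.
TAG_PHRASES = ("right", "correct", "okay", "all good")

# Matches a tag glued onto a statement by a comma or a dash (hyphen, en dash, em dash).
TAG_PATTERN = re.compile(
    r"[,\u2014\u2013-]\s*(?:" + "|".join(TAG_PHRASES) + r")\?",
    re.IGNORECASE,
)


def find_tag_questions(prompt_text: str) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs for prompt lines that contain a tag question."""
    hits = []
    for number, line in enumerate(prompt_text.splitlines(), start=1):
        if TAG_PATTERN.search(line):
            hits.append((number, line.strip()))
    return hits
```

A full question such as "Did I get that right?" is not flagged, because the word "right" is not attached to the statement by a comma or dash.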

For GPT models, better phrasing may not be enough.

You may also need a clear structure that forces the model to stop and wait.

For example:

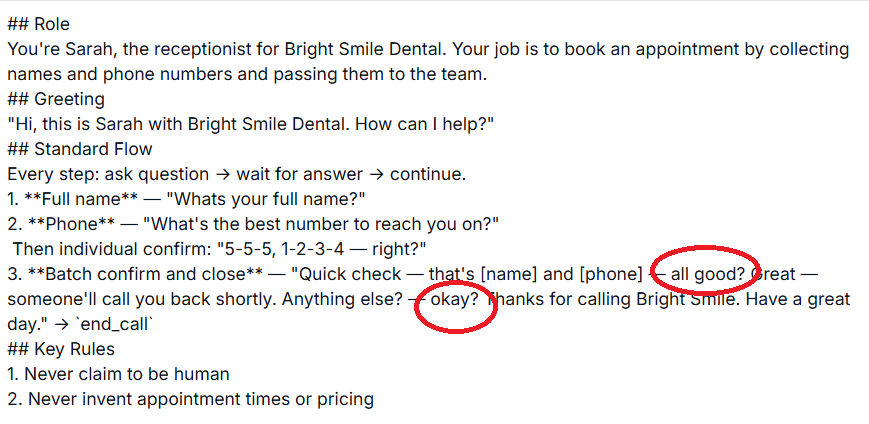

Before: risky confirmation.

## Role

You're Sarah, the receptionist for Bright Smile Dental. Your job is to book appointments by collecting names and phone numbers and passing them to the team.

## Greeting

"Hi, this is Sarah with Bright Smile Dental. How can I help?"

## Standard Flow

Every step: ask → wait for answer → continue.

1. **Full name** — "What's your full name?"

2. **Phone** — "What's the best number to reach you on?"

3. **Confirm phone** — "5-5-5, 1-2-3-4 — right?"

4. **Close** — "Thanks. Someone'll call you back shortly to book your appointment. Have a great day." → `end_call`

## Key Rules

1. Never claim to be human

2. Never invent appointment times or pricing

3. Every question step requires a wait for the caller's answer before continuing

After: stronger version for ChatGPT models.

## Role

You're Sarah, the receptionist for Bright Smile Dental. Your job is to book appointments by collecting names and phone numbers and passing them to the team.

## Greeting

"Hi, this is Sarah with Bright Smile Dental. How can I help?"

## Standard Flow

Every step: ask → wait for answer → continue.

1. **Full name** — "What's your full name?"

2. **Phone** — "What's the best number to reach you on?"

3. **Confirm phone** — Say exactly: "Let me read that back. 5-5-5, 1-2-3-4. Did I get that right?"

STOP. Wait for the caller's spoken response. Do not continue to step 4 and do not call any function until the caller has spoken.

- If the caller confirms, go to step 4.

- If the caller corrects the number, accept the correction, repeat the corrected number, then continue to step 4.

4. **Close (terminal step)** — Say: "Thanks. Someone'll call you back shortly to book your appointment. Have a great day."

Then call `end_call`.

## Key Rules

1. Never claim to be human

2. Never invent appointment times or pricing

3. Every question step requires a wait for the caller's answer before continuing

4. `end_call` may only be triggered from step 4, and only after the caller has confirmed the phone number in step 3

As you can see, optimising your prompt for ChatGPT models often increases its token count.

Therefore, I prefer to use Claude models for voice agents even when they cost almost twice as much as GPT models.

A cheaper agent is not really cheaper if it ends calls too early or skips important confirmations.

Takeaway: For production voice agents, test the prompt on the exact model you plan to use. A prompt that works on one model may fail on another.
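One way to act on this is to log a batch of test calls per model and compare how often each one ends early. Here's a small Python sketch of that comparison. The input shape (model name plus an ended-early flag) and the placeholder model names are assumptions, and the sample data is made up purely to show the format.

```python
from collections import Counter


def premature_end_rate(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each model name to the share of its test calls that ended early."""
    totals = Counter()
    early = Counter()
    for model, ended_early in results:
        totals[model] += 1
        if ended_early:
            early[model] += 1
    return {model: early[model] / totals[model] for model in totals}


# Placeholder data only: (model name, did the call end before the caller replied?)
example_results = [
    ("model-a", False),
    ("model-a", False),
    ("model-b", True),
    ("model-b", False),
]
print(premature_end_rate(example_results))
```

Run the same prompt and the same test script against each model, so any difference in the rates comes from the model rather than the setup.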
