BigQuery cost optimization refers to the process of minimizing unnecessary expenditures while still meeting the performance and data analysis requirements.

Contrary to popular belief, Google BigQuery is a cost-effective solution. However, it can get expensive pretty fast in the hands of a rookie.

Factors which determine BigQuery Cost for your company

Your monthly cost of using BigQuery depends upon the following factors (but is not limited to):

#1 The amount of data you stored in BigQuery (i.e. the data storage cost)

#2 The amount of data you processed by each query you run (i.e. the data processing cost).

#3 The amount of data you transfer in and out of BigQuery (i.e. the data transfer cost).

#4 The cost associated with the type of BigQuery Edition (‘Standard’, ‘Enterprise’, ‘Dedicated’) you use (i.e. the BigQuery edition cost).

The initial 10 GB of active storage each month is free. Beyond that, you will incur a fee of $0.020 per GB for active storage.

Each month, the first 1 terabyte of processed data is also included in the free tier. Any additional processed data will be billed at $5 per terabyte (TB).

As long as you stay within the 10 GB storage and one terabyte query limit per month, there will be no charges to your credit card.

Your credit card will be charged only when you exceed these free limits.

Suppose you begin querying terabytes or petabytes of data daily in BigQuery. In that case, be prepared for substantial monthly data storage and/or data processing fees.

Prerequisites for BigQuery Cost Optimization

Understanding the following terms in the context of Google BigQuery can help you make informed decisions about managing your data and optimizing storage costs:

- Storage Units

- Number of rows

- Number of partitions

- Logical bytes vs Physical bytes

- Active bytes vs Long term bytes

- Total Logical bytes.

- Active logical bytes.

- Long term logical bytes.

- Total Physical bytes.

- Active physical bytes.

- Long term physical bytes.

- Time travel physical bytes.

#1 Storage Units

The storage units are used to measure the amount of data that can be stored on a device or storage media.

Storage units can be defined as decimal units of measurement or binary units of measurement.

Decimal units are based on the decimal system, which uses base 10.

The following are the most common decimal storage units:

- Bit (b) is a binary digit, meaning it can be either a 0 or a 1. It is the smallest unit of data storage.

- Byte (B) is a group of 8 bits. One byte can represent a single character or a small piece of data.

- Kilobyte (KB) is equal to 1,000 bytes.

- Megabyte (MB) is equal to 1,000 kilobytes.

- Gigabyte (GB) is equal to 1,000 megabytes.

- Terabyte (TB) is equal to 1,000 gigabytes.

- Petabyte (PB) is equal to 1,000 terabytes.

Binary units are based on the binary system, which uses base 2.

The following are the most common binary storage units:

- Kibibyte (KiB): A kibibyte is equal to 1,024 bytes.

- Mebibyte (MiB): A mebibyte is equal to 1,024 kibibytes.

- Gibibyte (GiB): A gibibyte is equal to 1,024 mebibytes.

- Tebibyte (TiB): A tebibyte is equal to 1,024 tebibytes.

- Pebibyte (PiB): A pebibyte is equal to 1,024 tebibytes.

To summarize:

1 KB (kilobyte) = 1,000 bytes (decimal)

1 KiB (kibibyte) = 1,024 bytes (binary)

This means that a KiB is about 2.4% larger than a KB.

What is the purpose of using decimal and binary storage units?

The main difference between decimal and binary storage units is that binary storage units are more precise.

Therefore they are better suited for estimating the monthly cost of data storage and processing.

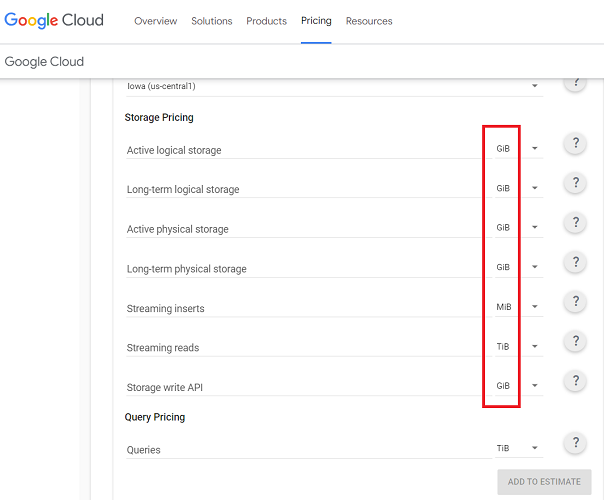

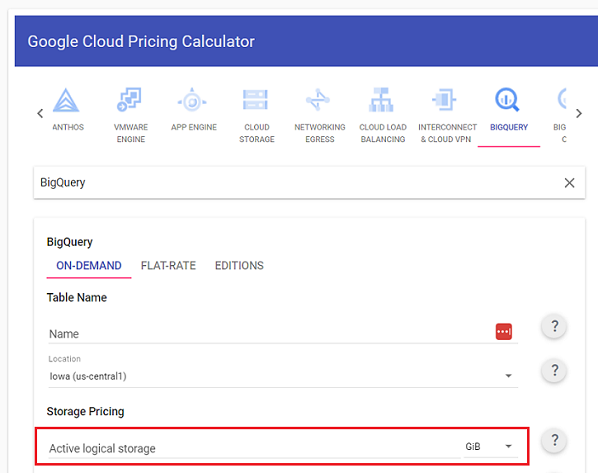

That’s why when you use the Google Cloud pricing calculator, all the storage units are defined using binary units of measurement:

The decimal storage units are most commonly used for displaying storage information to end users.

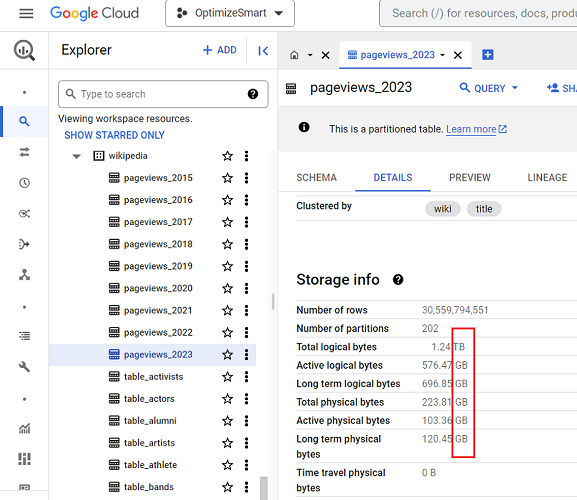

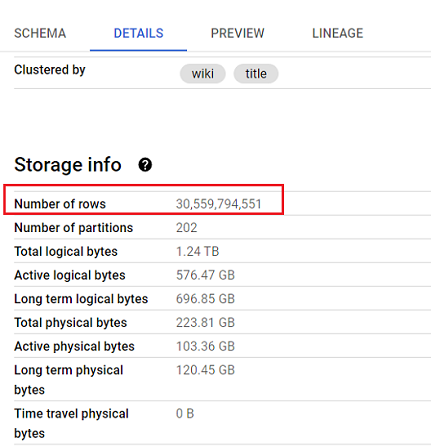

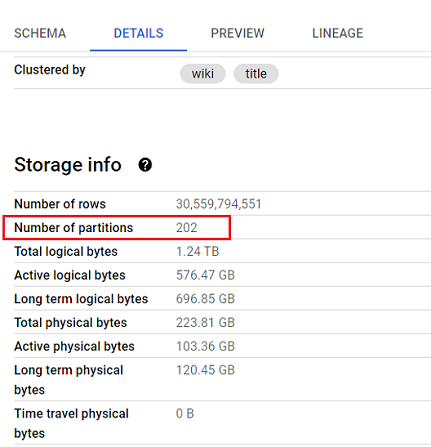

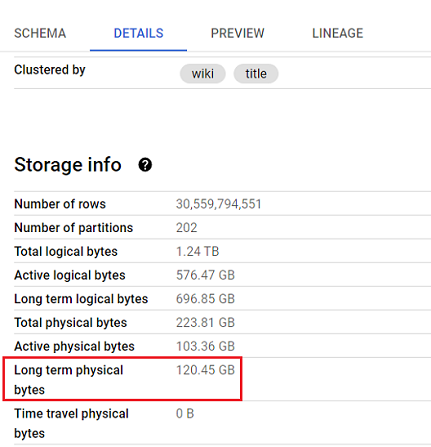

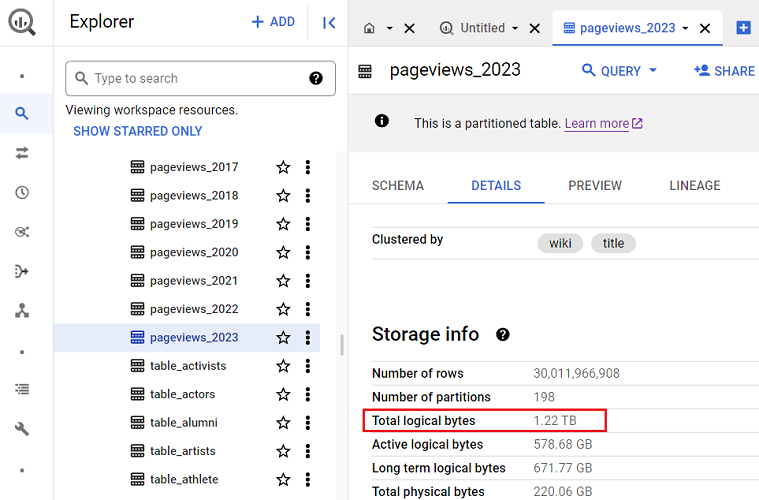

For example, the storage information displayed for a BigQuery data table uses the decimal storage units:

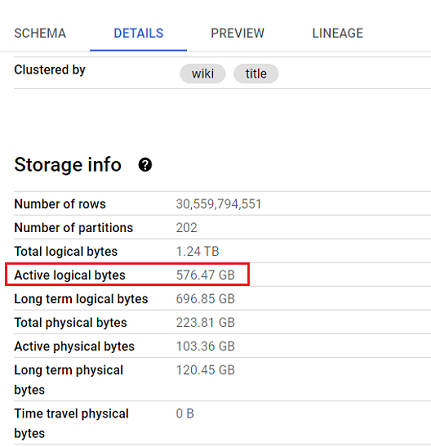

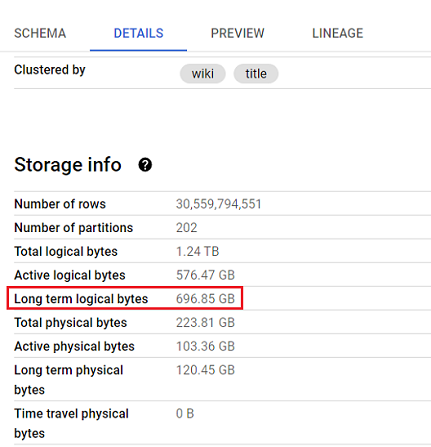

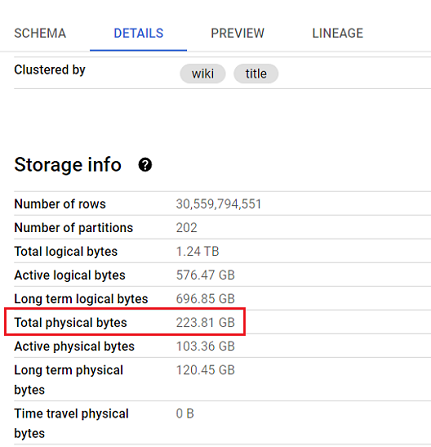

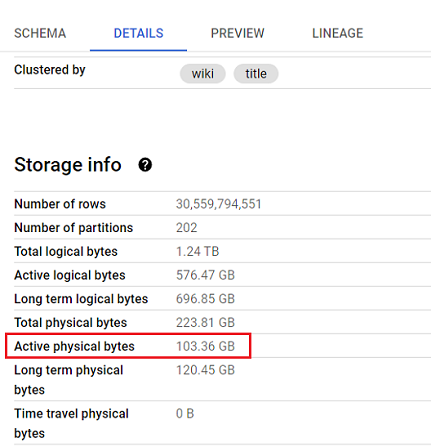

#2 Number of rows

In Google BigQuery, when you view the details of a data table, it shows the number of rows under the storage information:

The number of rows (or records) is shown because it gives users an immediate understanding of the volume of data contained within the data table.

A table with a large number of rows can take longer to query than a table with a small number of rows.

Thus the number of rows in a data table can affect the performance of queries on the table.

For these reasons, BigQuery data tables show the number of rows under the storage information.

#3 Number of partitions

In Google BigQuery, when you view the details of a data table, it can show the number of partitions under the storage information:

BigQuery data tables show the number of partitions under the storage information because the number of partitions in a table can affect the performance of queries on the table and the storage cost.

Partitions in BigQuery are a way of dividing a data table into smaller, logical units based on a specified partition key.

The partitioning keys should be columns that are frequently used in queries.

This division helps reduce storage costs and improve query performance, as it allows BigQuery to efficiently narrow its focus to only the relevant partitions when processing a query.

For example, GA4 BigQuery data tables are already partitioned by day.

This creates a separate partition for each day of data and makes it much easier to find the data for a specific day, as BigQuery would only need to search the partition for that day.

The partitioning of GA4 BigQuery data tables is a great example of how partitioning can be used to improve the performance of queries.

Important factors when partitioning a BigQuery data table

Keep the following factors in mind when you are considering partitioning a BigQuery data table:

#1 The number of partitions you create will affect the performance of queries. Too many partitions can make queries slower, while too few partitions can make queries less efficient.

#2 The size of the partitions also affects the performance of queries. Too large partitions can make queries slower, while too small partitions can make queries less efficient.

#3 If you have a table that is frequently queried by date, you should partition the table by date (time-based partitioning).

#4 If you have a table that is frequently queried by a specific column, you should partition the table by that column (column-based partitioning).

#5 If you have a table that is growing rapidly, you should partition the table to improve the performance of queries and reduce storage costs.

#4 Logical bytes vs Physical bytes

The Logical Bytes represent the uncompressed size of the data table.

It refers to the amount of data processed when running a query without any data pruning or optimizations (like filtering, partition elimination, or clustering).

The logical bytes help estimate the potential cost of querying the entire dataset without any optimization.

In contrast, Physical Bytes represent the compressed size of the data table, reflecting the actual space occupied on the disk. This is the amount of space that is charged for by BigQuery.

#5 Active bytes vs Long term bytes

The Active bytes refer to the amount of data currently used by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table.

The ‘Active bytes’ metrics are updated periodically.

The Active Bytes metric can help you understand how much storage is being used by your queries. This information can help optimize your queries to improve performance.

In contrast, the Long term bytes refer to the amount of data NOT currently used (by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table) but that is still stored in the table.

The Long Term Bytes metrics can help you understand how much storage is being used by your table that is not currently being used.

This information can help optimize your storage costs by identifying tables that can be archived or deleted.

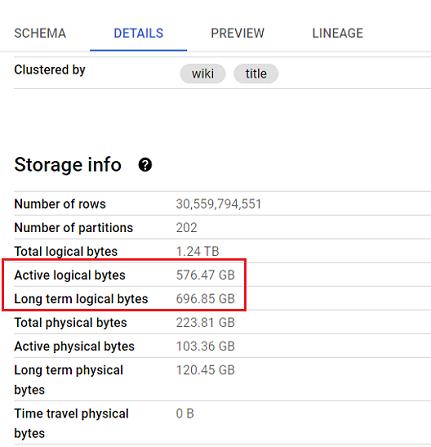

#6 Total Logical Bytes

The ‘Total Logical Bytes’ represents the total uncompressed size of the data table, including all the data contained within it.

The total logical bytes in a data table can be calculated by adding up the size of each column in the table.

The size of each column is determined by the type of data stored in the column and the length of the data.

For example, a column that stores text data will take up more space than a column that stores numeric data.

Similarly, a column that stores a long text string will take up more space than a column that stores a short text string.

#7 Active logical bytes

The ‘Active logical bytes’ refers to the amount of data currently used by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table.

The ‘Active logical bytes’ metric is updated periodically.

The ‘Active logical bytes’ metric is different from the Total logical bytes metric in that it only reflects the data that is currently being used by the table.

On the other hand, the ‘Total logical bytes’ metric reflects the total amount of data stored in the table, regardless of whether or not the data is currently being used.

#8 Long term logical bytes

The ‘Long term logical bytes’ refers to the amount of data NOT currently used (by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table) but that is still stored in the table.

The ‘Long term logical bytes’ metric is updated periodically.

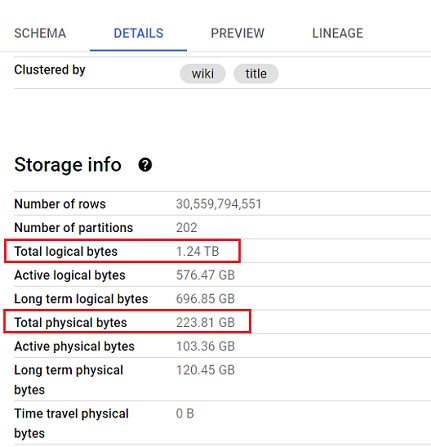

#9 Total Physical bytes

The ‘Total Physical Bytes’ represents the total compressed size of the data table, including all the data contained within it.

This is the amount of space that the table actually takes up on disk. This is the amount of space that is charged for by BigQuery.

The main difference between ‘total logical bytes’ and ‘total physical bytes’ metrics is that total logical bytes refer to the uncompressed size of the table, while total physical bytes refer to the compressed size of the table.

#10 Active physical bytes

The ‘Active physical bytes’ refers to the amount of compressed data currently used by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table.

The ‘Active physical bytes’ metric is updated periodically.

The main difference between Active Logical Bytes and Active Physical Bytes is that Active Logical Bytes refer to the uncompressed size of the data currently being used by a table.

In contrast, Active Physical Bytes refer to the compressed size of the data currently being used by a table.

#11 Long term physical bytes

The ‘Long term physical bytes’ refers to the amount of compressed data NOT currently used by active queries, cached queries and other operations, such as loading data into the table or exporting data from the table.

The ‘Long term physical bytes’ metric is updated periodically.

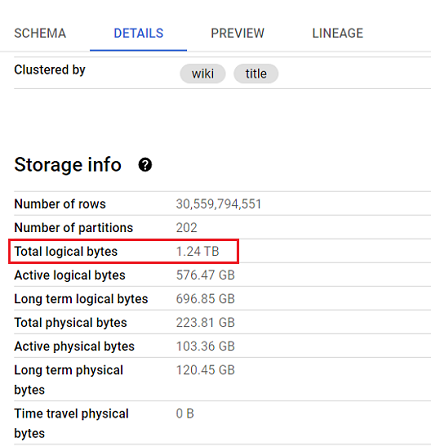

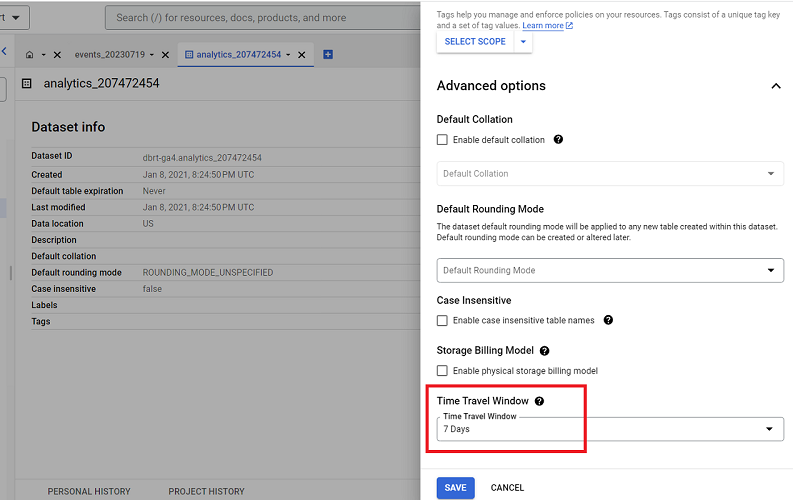

What is Time Travel in BigQuery

Time travel functionality in BigQuery allows you to perform various data recovery and historical analysis tasks.

It enables you to query data that has been updated or deleted, restore deleted tables, or access expired tables within a specific time travel window, which is typically the past seven days by default:

During this time travel period, any changes or deletions made to data in the dataset’s tables are retained in case you need to recover them.

To learn more about time travel, check out the official help documentation from Google: Data retention with time travel and fail-safe.

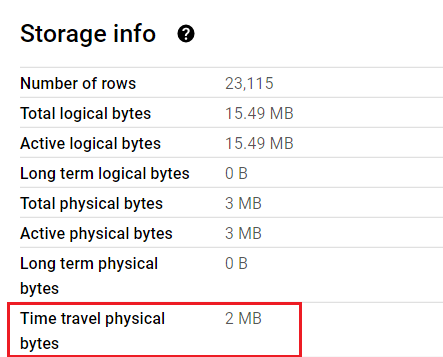

#12 Time travel physical bytes

The ‘Time travel physical bytes’ metric represents the storage size used for maintaining the historical data required for time travel functionality.

This metric is calculated by considering the compressed data stored for time travel purposes and the compression algorithm applied to compress this historical data.

The ‘Time travel physical bytes’ metric is updated periodically to provide accurate information about the storage requirements for time travel.

BigQuery operations that are free of charge

The following BigQuery operations are free of charge in any location:

- The first 10 GiB per month of storage is free.

- The first 1 TB of query data processed per month is free.

- Queries that result in an error are free of charge.

- Cached queries.

- Deleting tables, views, partitions, functions and datasets

For more details, refer to the official BigQuery pricing documentation.

BigQuery Cost Optimization best practices

The following are the best practices when it comes to reducing BigQuery costs:

- Practice data minimization.

- Avoid mindless data processing.

- Before you query the data from a table, check the size of the table.

- Before you query the data from a table, preview the table.

- Always look at how much data your query will process before you run your query.

- Your query cost depends on the number and/or size of the returned columns, not the rows.

- Your query cost is also affected by the size of each column.

- Avoid using SELECT *

- Applying a LIMIT clause to a SELECT * query does not affect the query cost.

- Set up Budget alerts.

- Set up Quota limits.

- Regularly monitor your spending.

- Use the Google Cloud pricing calculator.

- Transform BigQuery data before you send them to data platforms.

#1 Practice data minimization

Data minimization is the practice of collecting, storing and using only the personal data which you absolutely need for the purpose you have specified in your privacy policy.

Collecting unnecessary data about website users and customers can violate the General Data Protection Regulation (GDPR) rules.

Other than the privacy benefits, implementing data minimization techniques can help reduce the cost of using BigQuery.

When you minimize data collection, you only retain and process the essential information required for your data analysis or business operations.

By removing redundant or obsolete data, you can reduce the storage space needed in BigQuery, thereby lowering data storage costs.

Additionally, minimizing the volume of data being processed can decrease the amount of data scanned during queries, reducing query costs.

One of the biggest complaints I often hear about GA4 BigQuery usage is exceeding the daily BigQuery export limits.

These limits are good enough for some businesses to give up on GA4 completely.

That’s why you must evaluate your tracking requirements seriously.

Do not collect unnecessary event data, esp. at the expense of business-critical information.

Audit your GA4 property and find and remove events that are not business-critical information.

The best practice is to minimize the number of events you track so you don’t easily hit the BigQuery export limits.

#2 Avoid mindless data processing.

Mindless data processing is indiscriminate or excessive data processing without a clear objective.

If you regularly find yourself testing the limits of Google Sheets or MS Excel, you are most likely not ready for BigQuery.

Because that means you have the habit of mindlessly processing a large amount of data.

You do not have clearly defined data analysis objectives. You do not have clearly defined business questions.

Most people download a large chunk of data and then decide what to do with it. You can get away with this bad habit when using Google Sheets/Excel.

What’s the worst that could happen? Your application will freeze.

But what’s the worst that could happen when you bring your bad habit to BigQuery?

BigQuery will charge your company dearly for mindless data processing. You could end up paying hundreds or thousands of dollars to Google each month.

When you engage in mindful data processing, you carefully consider the data you query in BigQuery. You avoid unnecessary joins, aggregations, or excessive data transformations.

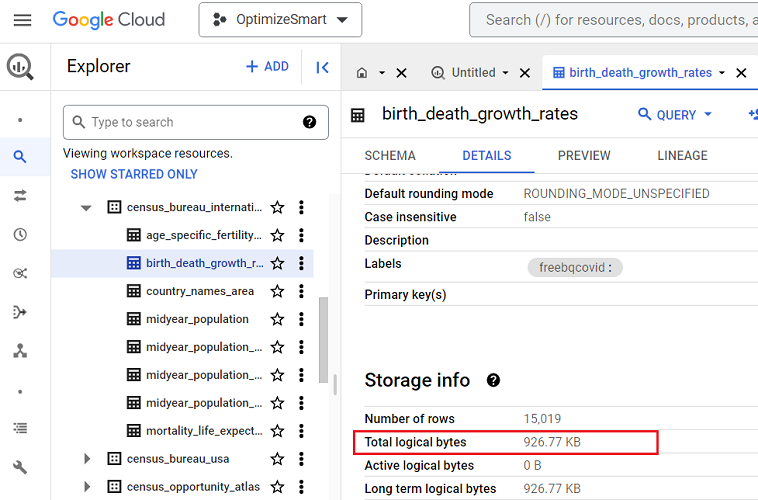

#3 Before you query the data from a table, check the size of the table.

In BigQuery, the table size refers to the total logical bytes occupied by the data stored in a table.

If the size of the data table is just a few kilobytes (KB) or megabytes (MB), you don’t need to worry.

But if the table size is in gigabytes (GB), terabytes (TB) or petabytes (PB), you should be careful how you query your data.

For example, you should be careful when querying the following data table as it is in terabytes:

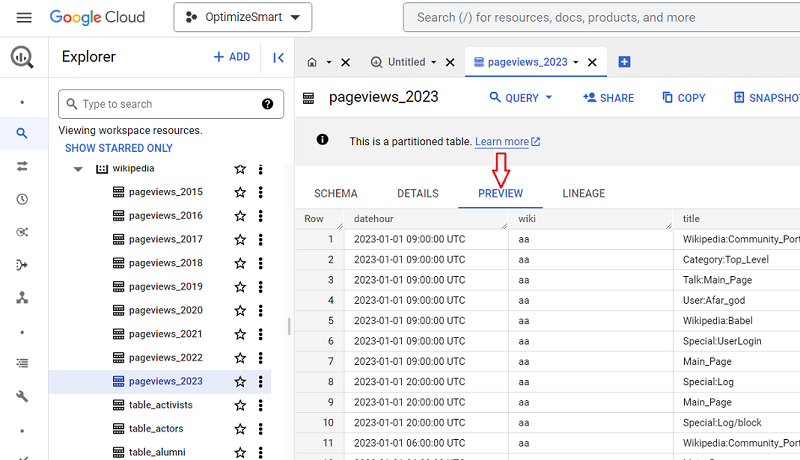

#4 Before you query the data from a table, preview the table

Many people, especially new users, run queries just to preview the data in a data table.

This could considerably cost you if you accidentally queried gigabytes or terabytes of data.

Instead of running queries just to preview the data in a data table, click on the ‘Preview’ tab to preview the table.

There is no cost for previewing the data table.

The table preview will give you an idea of what type of data is available in the table without querying the table.

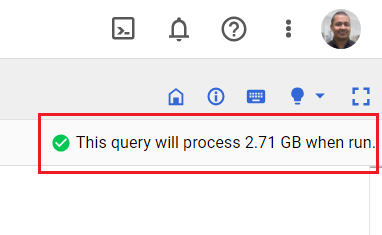

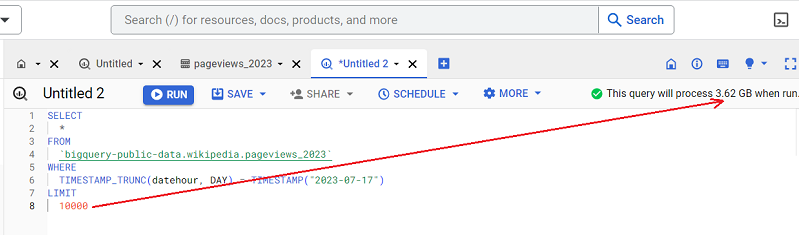

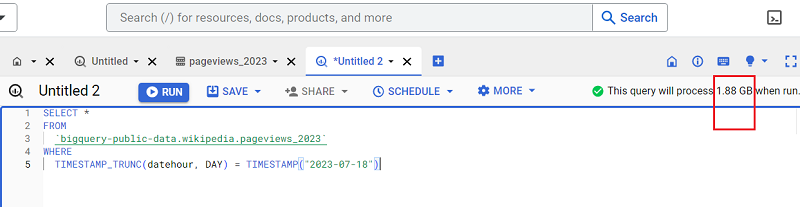

#5 Always look at how much data your query will process before you run your query.

If your query is going to process only kilobytes or megabytes of data, then you don’t need to worry.

However, if your query is going to process gigabytes or terabytes of data, it could considerably cost you:

If that’s the case, query only that data, which is absolutely necessary.

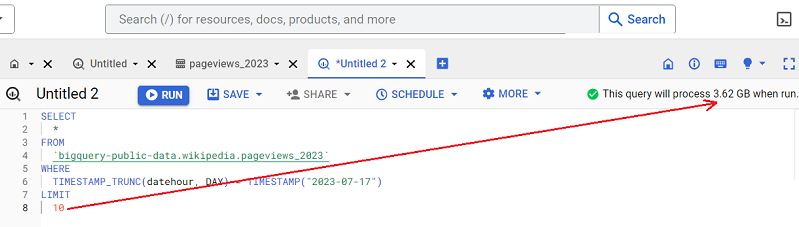

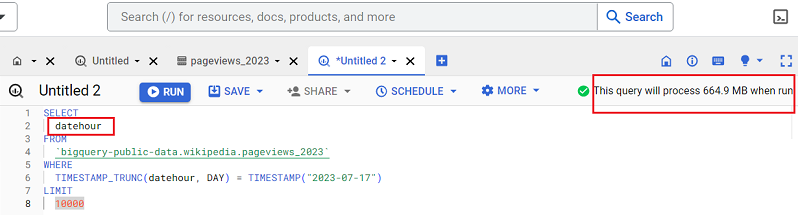

#6 Your query cost depends on the number and/or size of the returned columns, not the rows.

Returning 10 rows/records is going to cost you the same as returning 10,000 records of data:

The number of rows/records your query returns does not affect your query cost.

Your query cost is affected by the number of columns your query returns.

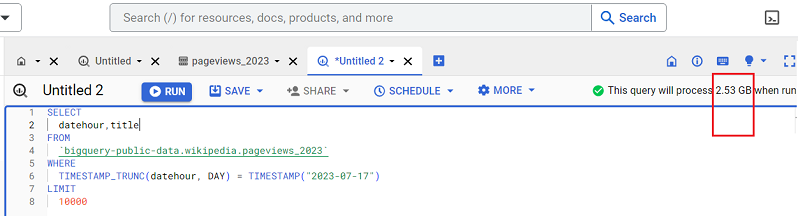

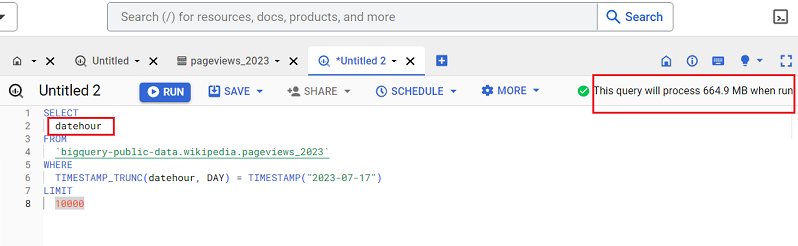

Following is an example of a query which would return one column named ‘datehour’:

Following is an example of a query which would return two columns named ‘datehour’ and ‘title’:

You can see from the screenshot how adding another column to the query increased the query size from 664.9 MB to a whopping 2.53 GB.

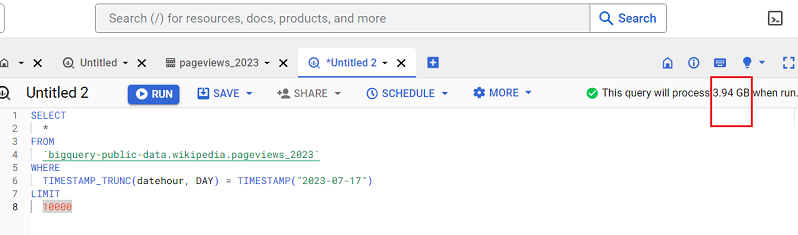

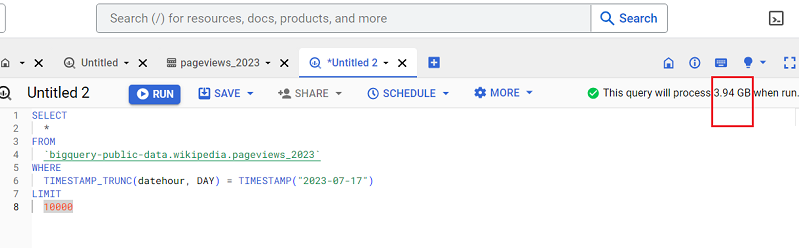

What would happen if we wrote a query that returns all the table columns?

So if we try to return all the columns of this data table, 3.94 GB of the data would be processed.

So only query the columns you really need.

#7 Your query cost is also affected by the size of each column.

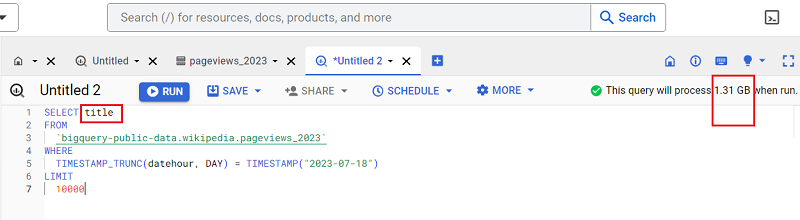

The query below returns one column named ‘datehour’:

The query below returns one column named ‘title’:

Note how the size of the query increased from 664.9 MB to 1.31 GB.

So you must be very careful about the size of the column you want to retrieve.

#8 Avoid using SELECT *

SELECT * means returns all the columns of the data table.

Now, if your data table contains a lot of columns and some of the columns are very big in size (maybe in GB or TB), using SELECT * could considerably increase your query cost.

So the best practice is to avoid using SELECT *

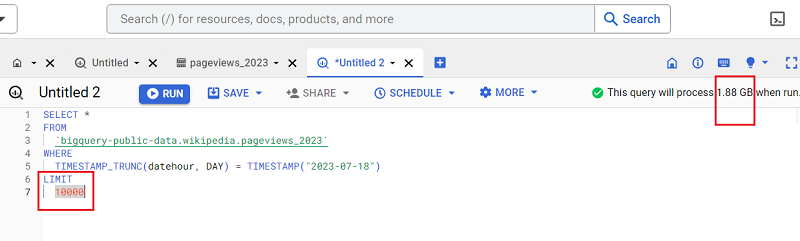

#9 Applying a LIMIT clause to a SELECT * query does not affect the query cost

This is because the LIMIT clause controls the number of rows/records your query returns.

But as you know by now, the number of rows/records your query returns doesn’t affect your query cost.

With the LIMIT clause:

Without the LIMIT clause:

#10 Set up Budget alerts

Set up cloud billing budgets and budget alerts which trigger email notifications to billing admins and/or project managers when your costs (actual costs or forecasted costs) exceed a percentage of your budget (based on the threshold rules you set).

These email alerts inform you of your usage costs trending over time.

Note: Setting up a budget does not automatically cap Google Cloud usage or spending.

For more information on setting up cloud billing budgets and budget alerts, check out the official help documentation from Google: https://cloud.google.com/billing/docs/how-to/budgets

#11 Set up Quota limits

You can turn on cost control at a project level or user level by setting up/customizing quota limits.

That way, you can cap the maximum number of bytes processed per day by a given user or project.

When the user/project exceeds their quota limit, the query will not be processed, and a “quota exceeded” error message will be displayed.

To learn more about working with Quotas, check out the official help documentation from Google: https://cloud.google.com/docs/quota

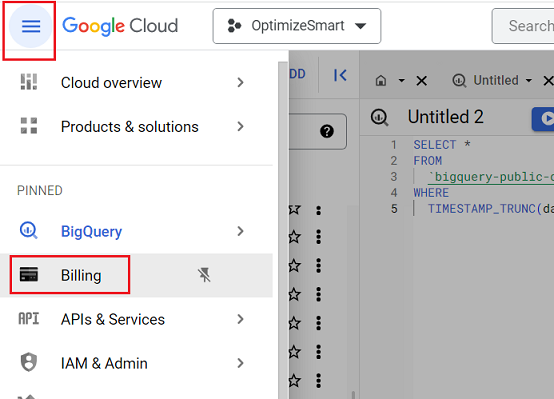

#12 Regularly monitor your spending

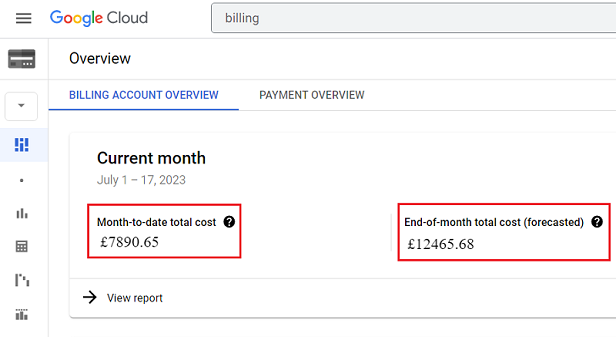

At least once a week, visit the ‘Billing‘ section of your Google Cloud Platform account to see how much you have spent so far:

#13 Use the Google Cloud Pricing Calculator

The Google Cloud pricing calculator estimates the monthly storage cost and/or cost of running your desired queries before you actually run them.

To learn more, check out this article: Using Google Cloud pricing calculator for BigQuery.

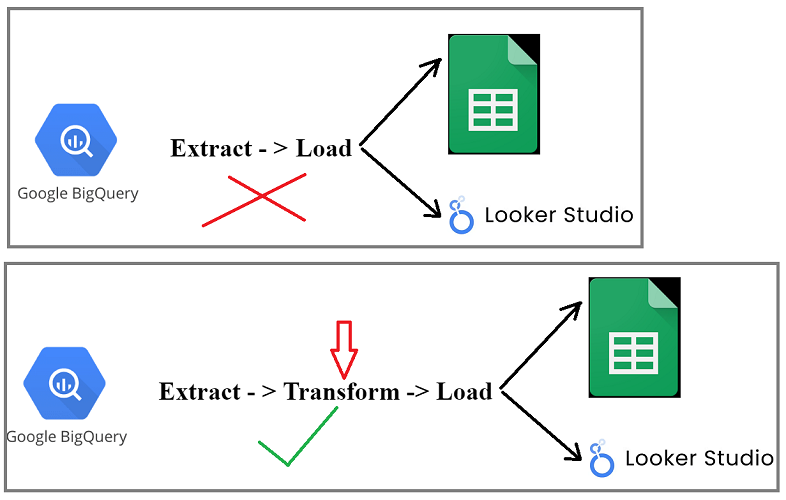

#14 Transform BigQuery data before you send them to data platforms.

One rookie mistake that many BigQuery users make is not transforming the data before sending it to other data platforms (like Google Sheets and Looker Studio).

This mistake could be costing them thousands of extra dollars each month in data processing costs.

It’s called ETL for a reason. Extract -> Transform -> Load and not Extract and Load.

Before you extract data from BigQuery, you should transform that data within BigQuery.

When you connect a data platform like Google Sheets/Looker Studio to a raw data table in your BigQuery project, you are most likely to run the dreaded SELECT * (retrieve all columns) SQL query in the background with every interaction with your data platform.

This is because the data platform does not know what columns you need, so it will just retrieve all of the columns.

This can be a problem if the raw data table is large, as it can result in a lot of data being transferred and processed.

It can also be a problem if you only need a subset of the columns, as you will still be paying for processing all of the columns.

To avoid this problem, you should transform the data before connecting it to the data platform.

This means removing unnecessary columns and rows and formatting the data in a way that the data platform can understand.

You can also partition the data, which will help reduce the amount of data needed for each query.

Suppose you connected your Looker studio report directly to your BigQuery raw data table.

Now every interaction with your report could result in you querying the entire raw data table in BigQuery.

If the raw data table is in GB or TB, you could end up querying GB/TB of data just by interacting with a Looker studio report for a couple of minutes.

What you should be doing instead is creating and using partitioned tables that contain the transformed data in your desired format.

Instead of extracting data directly from raw data tables, extract data from partitioned tables.

Instead of connecting your data platforms to raw data tables in BigQuery, connect to partitioned tables.

GA4 BigQuery data is already partitioned by dates.

But that may still not be enough (depending upon your website traffic), and you may need to do further partitioning like row-based partitioning (partitioning based on the values of one or more columns) or expression-based partitioning (partitioning based on criteria) to reduce the size of your queries and the data processing cost.

The following are the key benefits of using partitioned tables:

- Cost Savings – You will query less data, which translates to lower costs.

- Performance – Querying partitioned tables can be faster because you scan less data.

- Flexibility – You can maintain a high level of detail in your data but only query the specifics you need.

Summary:

Extract: Take the raw data from its source. In this case, this would be GA4 data.

Transform: Before loading this data elsewhere, transform it within BigQuery. This could include:

- Aggregating data so there’s less of it to query (e.g., summing up daily to monthly metrics).

- Filtering out unnecessary data.

- Using partitioned tables.

- Row-based partitioning based on certain columns’ values.

- Expression-based partitioning based on specific criteria.

Load: Once the data has been transformed and partitioned, load it into your desired platform (Looker, Google Sheets, etc.).

Related Articles:

- Tracking Pages With No Traffic in GA4 BigQuery.

- First User Primary Channel Group in GA4 BigQuery

- How to handle empty fields in GA4 BigQuery.

- Extracting GA4 User Properties in BigQuery.

- Calculating New vs Returning GA4 Users in BigQuery.

- How to access BigQuery Public Data Sets.

- How to access GA4 Sample Data in BigQuery.

- Understanding engagement_time_msec in GA4 BigQuery.

- GA4 BigQuery Attribution Tutorial.

- How to backfill GA4 data in BigQuery.

- How to send data from Google Search Console to BigQuery.

- Google Advanced Consent Mode and GA4 BigQuery Export.

- Google Analytics 4 BigQuery Tutorial for Beginners to Advanced.

- Prompt Engineering for GA4 BigQuery SQL Generation.

- How to create a new BigQuery project.

- How to create a new Google Cloud Platform account.

- How to overcome GA4 BigQuery Export limit.

- BigQuery Cost Optimization Best Practices.

- event_timestamp vs user_first_touch_timestamp GA4 BigQuery.

- GA4 BigQuery Video Tracking Report.